If you use AI to create, here's what the new rules mean for you

$1.5 billion, 11,500 consultation responses, and one opt-out you probably haven't activated

If you create anything for a living and you use AI tools to do it, you need to know what’s happening with regulation right now.

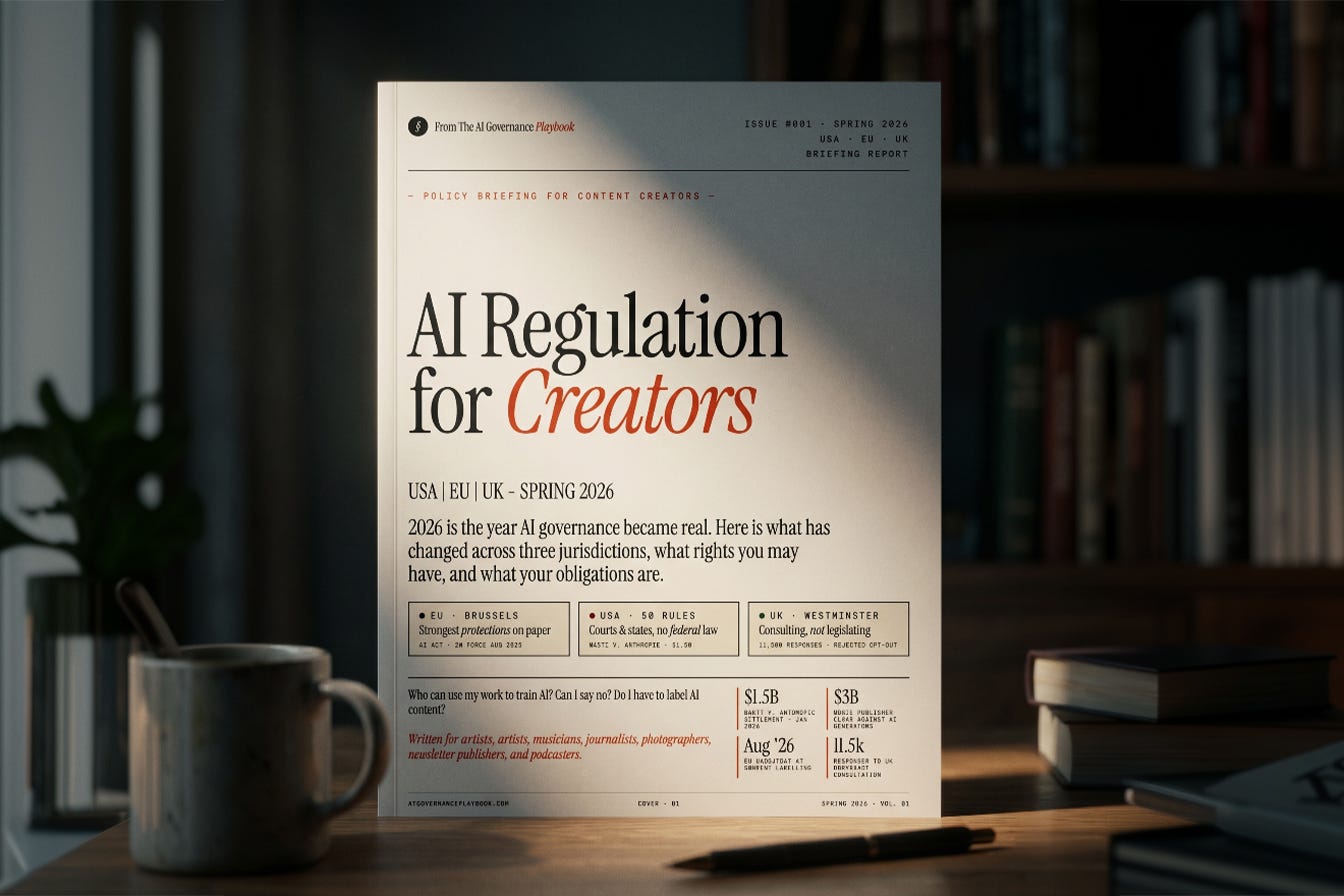

I published a free briefing this week over at the AI Governance Playbook. Eleven pages covering the EU, US and UK. What’s in force, what’s coming, and what actually requires action on your part.

A few things will likely surprise you. The EU’s opt-out system for AI training data puts the responsibility entirely on you. If you haven’t published a machine-readable rights reservation, EU law currently allows AI companies to train on your public content. The opt-out exists, but you have to activate it.
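To make "machine-readable" concrete, here is a minimal sketch of one common approach: generating `robots.txt` rules that ask known AI crawlers not to fetch your site. The crawler names in `AI_CRAWLERS` and the helper `build_robots_txt` are illustrative assumptions, not a list from the briefing, and whether `robots.txt` alone counts as a valid rights reservation under EU law is still debated, so treat it as one signal among several.

```python
# Illustrative list of AI-crawler user agents (an assumption, not exhaustive).
# Check each vendor's documentation for the names currently in use.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "CCBot", "Google-Extended"]


def build_robots_txt(crawlers, disallow_path="/"):
    """Return robots.txt text with one Disallow rule per crawler."""
    blocks = []
    for agent in crawlers:
        # Each block names one crawler and the path it should not fetch.
        blocks.append(f"User-agent: {agent}\nDisallow: {disallow_path}\n")
    # A blank line separates the per-crawler blocks.
    return "\n".join(blocks)


if __name__ == "__main__":
    # Save the printed text as robots.txt at the root of your site.
    print(build_robots_txt(AI_CRAWLERS))
```

Crawlers follow `robots.txt` voluntarily, so this is a request rather than a technical block.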

In the US, the $1.5 billion Bartz v. Anthropic settlement in January drew a line that changes the calculation for every AI company acquiring training data. Sourcing training material from pirated copies is not protected by a fair use defence. The case has ripple effects for creators whose work appeared in shadow libraries without their knowledge.

The UK ran what may be the longest copyright consultation in recent memory, got 11,500 responses, then rejected the opt-out model it had been developing for two years. No new law yet. A market pilot instead.

There’s also a cross-border checklist on page 10 with eight actions you can take today, most of them free, all of them worth doing if you publish anything online.

Download it free!
